Why Karo is GDPR compliant by default

When a customer starts a conversation with a chatbot on your booking page, they are not thinking about data protection legislation. They want to know whether their tickets can be exchanged.

But somewhere in your organisation, someone is asking a different question: what happens to that customer’s personal data when it passes through an AI system?

Most AI products do not answer it clearly. If that question has held your venue back from deploying an AI assistant, this article is for you.

What the data protection concern actually is

Most AI systems work by taking a customer’s message, combining it with whatever customer data the system has access to, and passing the whole package to an AI model for a response. If your ticketing system holds a customer’s name, email address, booking history, and accessibility requirements, a poorly designed integration may send all of that to the AI model – whether the model needs it or not.
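To make the risk concrete, here is a minimal Python sketch of the kind of poorly designed integration described above. It does not reproduce any particular product; the record fields and the `build_naive_prompt` function are hypothetical and exist only for illustration.

```python
import json

# Hypothetical customer record; field names and values are invented for illustration.
customer_record = {
    "name": "Alex Example",
    "email": "alex@example.org",
    "booking_history": ["2024-03-02 matinee", "2024-05-11 evening"],
    "accessibility_requirements": "Wheelchair space",
}


def build_naive_prompt(message: str, record: dict) -> str:
    """Attach the entire record to the prompt, whether or not the query needs it."""
    return f"Customer data: {json.dumps(record)}\nCustomer message: {message}"


# The question is only about ticket exchange, yet every field travels with it.
prompt = build_naive_prompt("Can I exchange my tickets?", customer_record)
```

In this sketch the email address and accessibility note end up in text bound for a third-party model, even though neither is needed to answer a question about exchanges.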

The AI model is typically hosted by a third party. That means customer personal data – potentially including accessibility notes, bereavement requests, or concession details – transits cloud infrastructure that sits outside your direct control. From a data protection standpoint, this creates real obligations: data processing agreements, lawful basis assessments, records of processing activities. From a practical standpoint, it creates risk. Not all AI providers have data handling practices that sit comfortably with UK GDPR requirements.

This is not a fringe concern. It is the reason many venues have stalled on AI adoption entirely.

How Karo handles customer data differently

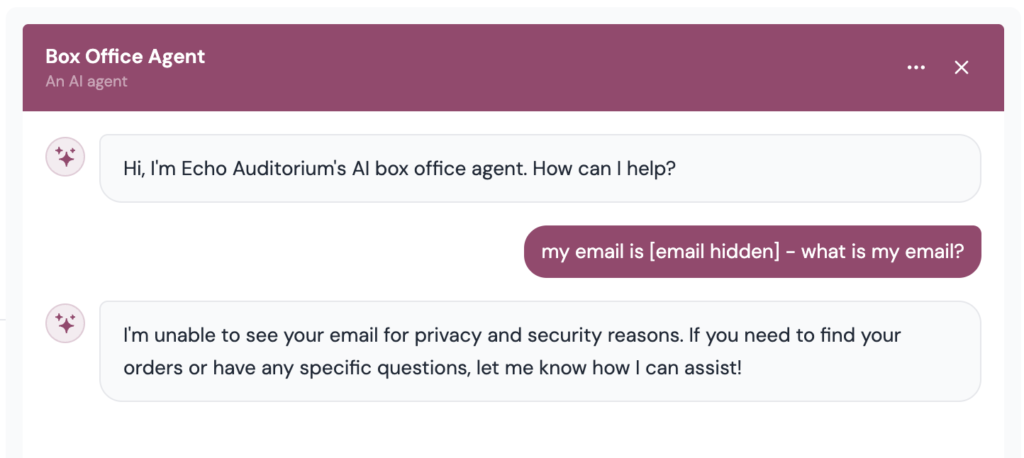

Karo does not send raw customer records to its underlying AI model. When a customer interacts with Karo, the system works from an anonymised representation of their account – a set of structured fields that allow Karo to answer their query accurately, without exposing the personal data that underpins it.

If a customer asks about their upcoming booking, Karo retrieves the relevant details through its connection to your ticketing system. It does not expose the customer’s full account information to the AI model. It extracts what is needed for the specific query, strips or replaces personally identifying fields, and operates on the result. The customer receives an accurate, helpful response, and their personal data does not leave your systems in a form that identifies them.

This approach – anonymisation at the point of query, built into the system by default rather than added as an option – is what distinguishes Karo from other products that simply forward whatever context they can find.
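The extract, strip, and replace steps described above can be sketched in a few lines of Python. This is an illustrative sketch under stated assumptions, not Karo's actual implementation: the field names, the `FIELDS_BY_QUERY` allow-list, the `PLACEHOLDERS` mapping, and the `build_model_view` function are all hypothetical.

```python
from typing import Any

# Hypothetical allow-list: the fields each type of query actually needs.
FIELDS_BY_QUERY: dict[str, frozenset[str]] = {
    "booking_query": frozenset({"event", "date", "seat_count"}),
}

# Hypothetical identifying fields that are replaced with a neutral placeholder
# rather than dropped, so a reply can still be phrased naturally.
PLACEHOLDERS: dict[str, str] = {"name": "[CUSTOMER]"}


def build_model_view(record: dict[str, Any], query_type: str) -> dict[str, Any]:
    """Return only what the query needs, with identifying fields replaced or removed."""
    allowed = FIELDS_BY_QUERY.get(query_type, frozenset())
    view: dict[str, Any] = {}
    for field, value in record.items():
        if field in allowed:
            view[field] = value                 # needed for this query: keep
        elif field in PLACEHOLDERS:
            view[field] = PLACEHOLDERS[field]   # identifying: replace
        # any other field is stripped and never reaches the model
    return view


# Hypothetical record as it might be held in a ticketing system.
record = {
    "name": "Alex Example",
    "email": "alex@example.org",
    "accessibility_requirements": "Wheelchair space",
    "event": "Spring Concert",
    "date": "2025-04-12",
    "seat_count": 2,
}

model_view = build_model_view(record, "booking_query")
```

Using an allow-list rather than a block-list means a field added to the ticketing system later is excluded by default until someone decides a query genuinely needs it, which mirrors the "default rather than option" principle described above.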

What this means for your compliance position

Under UK GDPR, the central question for any AI deployment is whether personal data is being transferred to a third-party processor, and on what basis. For most chatbot tools, the answer is yes: personal data flows to the AI provider, and the venue needs a data processing agreement that holds up to scrutiny.

Karo’s architecture is designed to narrow the scope of that question. Because Karo operates on anonymised records, the AI model it relies on does not process personal data in the conventional sense. The obligations that arise from third-party data processing are significantly reduced. You still need to understand what you have deployed and how it works – no system removes compliance responsibilities entirely – but the exposure is different in kind, and the conversation with your data protection officer or legal team is a shorter one.

Karo’s data flows are documented clearly, and the anonymisation approach is consistent across all deployments. It does not depend on individual configuration choices made at installation.

How this compares to other products

Many chatbot platforms describe themselves as GDPR-compliant, or note that they offer data processing agreements. This is a lower bar than it might appear. A data processing agreement does not prevent personal data from passing to an AI provider – it simply establishes terms for how the provider handles it. The data still leaves your systems.

Karo’s approach is different in structure, not just in documentation. The personal data stays in your systems. The AI model operates on the output of the anonymisation layer. That distinction matters when you are explaining your AI deployment to trustees, funders, or customers who ask directly what happens to their information.

What changes when compliance is not the obstacle

Adopting AI tools in a venue context should not require months of legal review. For many organisations, the compliance question is the real blocker – not cost or capability, but the difficulty of answering clearly: what happens to our customers’ data?

Karo is built so that question has a clear answer from the start. The anonymisation is not a configuration option or an add-on. It is the default. Venues working with sensitive customer data can deploy Karo with confidence that the architecture itself is not creating new compliance exposure.

For customer-facing assistance that works reliably and handles data responsibly, book a conversation with us here.